Rethinking FCR: Why the AI-Era Contact Center Still Needs a Human Touch

AI can raise FCR, but only if you measure resolution the way customers feel it and design clean handoffs when automation hits edge cases. Here’s a practical FCR playbook for AI-powered contact centers.

Recently I published an article on the optimal CX KPIs for the AI era, and why old standbys like AHT and deflection can miss what customers actually feel. In that piece, I called First Contact Resolution (FCR) the north-star metric.

This follow-up is about the part most teams struggle with: what it takes to protect FCR when AI is now part of the journey.

If your contact center is investing in AI agents, agent assist, or intelligent routing, you need an FCR strategy that still protects the one thing customers care about most: “Did you actually fix my problem?”

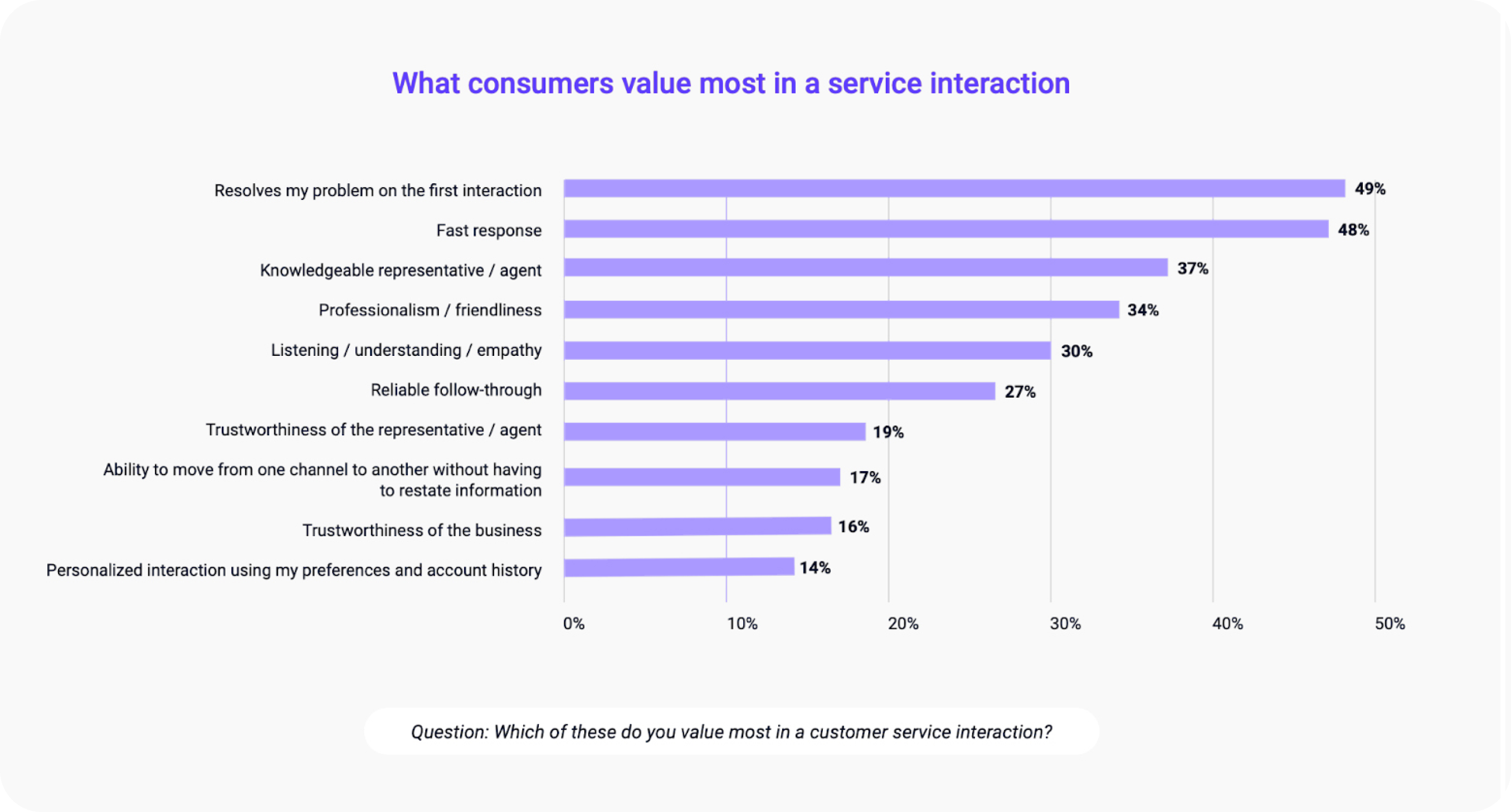

In a Genesys consumer survey, “resolve my problem on the first interaction” ranked #1, ahead of “fast response.” Genesys also found a sharp disconnect: consumers rank FCR #1, but CX leaders rank it #9, and only 32% say they even track it!

That gap is where a lot of AI disappointment comes from. Teams invest in automation, see lower agent volume, and call it success. Meanwhile, customers are still coming back because the issue wasn’t actually resolved.

This post is about fixing that. Not by rejecting AI, but by designing a hybrid model where AI improves first-touch accuracy, and humans protect trust when it matters.

The enduring importance of First Contact Resolution

FCR measures whether a customer’s issue is fully resolved in the first interaction without transfers, callbacks, or follow-ups. It’s one of the cleanest signals you have that customers are getting real closure.

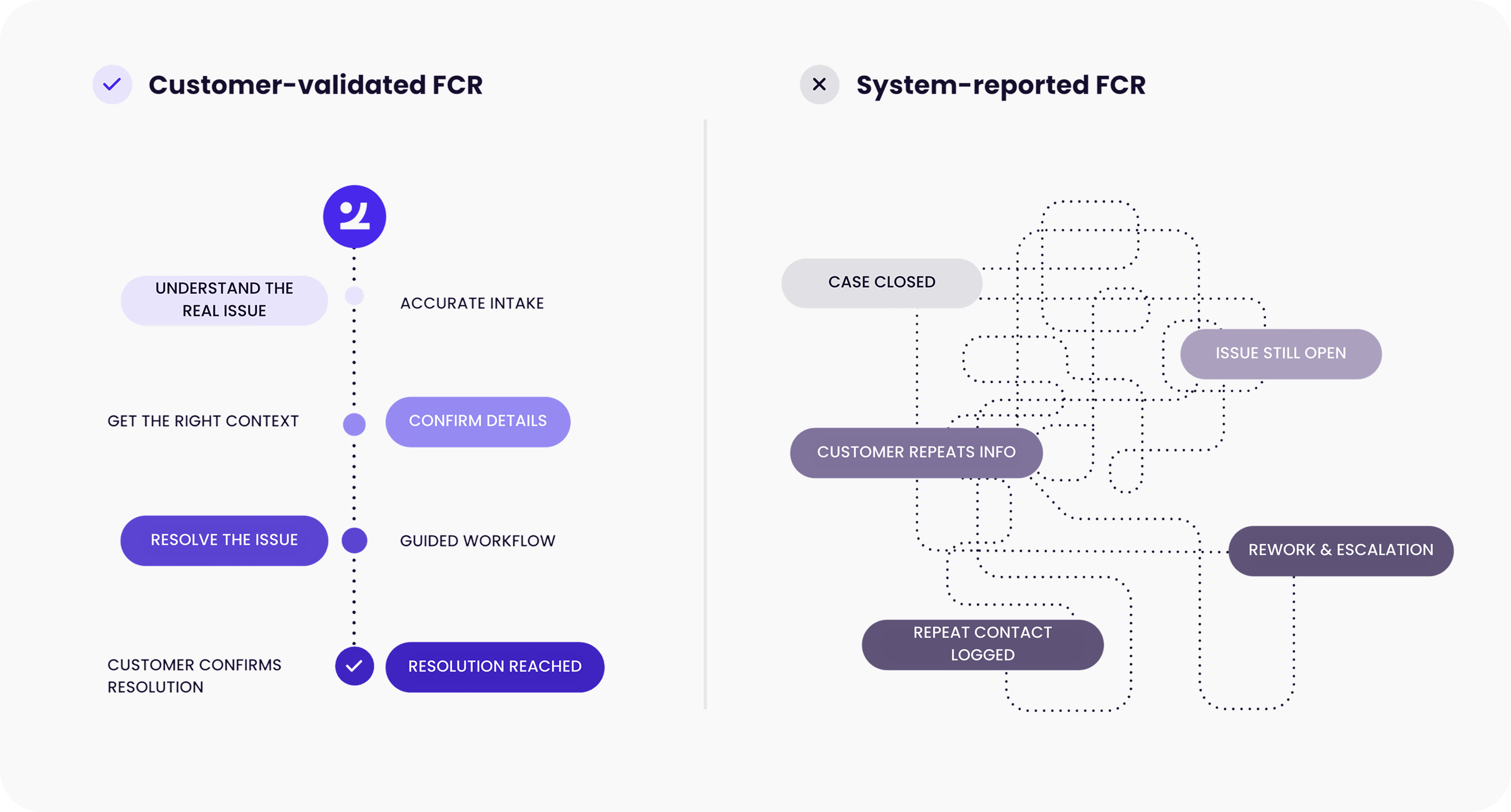

A lot of organizations end up measuring FCR as “one and done” from the system’s point of view:

- The chat ended

- The ticket was closed

- The bot contained the interaction

- The customer didn’t reach an agent

Those are point-in-time metrics. They’re easy to count. And in an AI era, they often look great.

However, FCR isn’t about whether the interaction ended. It’s about whether the problem stayed solved.

When teams treat FCR as a contact metric, they accidentally reward the wrong behavior: faster endings, higher containment, fewer handoffs. None of those guarantees that the customer got closure.

Real FCR shows up in what happens next:

- Strong FCR: customers get closure and don’t come back.

- Weak FCR: the work multiplies, context gets lost, customers repeat themselves, escalations increase, and repeat contacts creep up later.

That’s the trap: containment can look green while true resolution is failing. The system marks “resolved” while the customer is already dialing back.

Why the disconnect happens

In real operations, this gap usually comes down to three things:

1. FCR is hard to see in the moment

Most teams don’t have clean, end-to-end visibility across channels. They can’t tell if an interaction actually resolved the issue, or just ended the conversation.

- “First contact” gets defined differently across teams (call vs case vs chat session vs thread)

- “Resolved” can mean anything from “ticket closed” to “bot ended the chat”

- Repeat contacts show up later, often in a different channel, so the connection gets missed

2. Leaders optimize what the dashboards reward

When FCR is messy to measure, teams default to metrics that are easy to count and easy to improve fast: AHT, queue time, deflection, and containment.

That creates a quiet shift in priorities. Speed becomes the proxy for quality, even when customers are really asking for closure.

3. AI gets framed as a deflection project

A lot of AI rollouts start with the same goal: reduce agent load. AI can absolutely do that. But if success is defined as “fewer people reaching an agent,” you can increase deflection while FCR drops. The system calls it “resolved.” The customer calls back.

And expectations keep rising. IBM points to 71% of customers expecting personalized support, which raises the bar for first-touch accuracy. If the first contact feels generic or off, it doesn’t matter how fast it was.

How to correctly measure FCR in AI-powered contact centers

Out-of-the-box, system-reported FCR metrics (chat ended, ticket closed, bot contained) can hide problems in an AI-era journey. You can hit your “FCR” target while customers still churn because they feel deflected.

Here’s a modern scorecard that keeps “resolution” honest.

VOC-based FCR (the baseline that matters)

Count “resolved on first contact” only when the customer confirms resolution and confirms it took one contact.

Ask two questions right after the interaction (SMS, email, chat):

- Was your issue resolved during your most recent interaction? (Yes / No / Not sure)

- Including your most recent interaction, how many contacts did it take to resolve your issue? (1 / 2 / 3+)

Count “resolved on first contact” only when: Q1 = Yes and Q2 = 1.

Formula: VOC FCR = (# responses with Q1 = Yes and Q2 = 1) ÷ (total survey responses)
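To make the formula concrete, here is a minimal Python sketch. The `SurveyResponse` class, its field names, and the answer encodings ("yes", "1", and so on) are assumptions for illustration, not a survey platform's export format.

```python
from dataclasses import dataclass


@dataclass
class SurveyResponse:
    resolved: str  # answer to Q1: "yes", "no", or "not_sure" (assumed encoding)
    contacts: str  # answer to Q2: "1", "2", or "3+" (assumed encoding)


def voc_fcr(responses):
    """Share of survey responses where Q1 = Yes and Q2 = 1."""
    if not responses:
        return None  # no responses means there is no rate to report
    first_contact = sum(
        1 for r in responses if r.resolved == "yes" and r.contacts == "1"
    )
    return first_contact / len(responses)


responses = [
    SurveyResponse("yes", "1"),       # counts toward VOC FCR
    SurveyResponse("yes", "2"),       # resolved, but took two contacts
    SurveyResponse("no", "1"),        # first contact, not resolved
    SurveyResponse("not_sure", "1"),  # "Not sure" is not a confirmation
    SurveyResponse("yes", "1"),       # counts toward VOC FCR
]

print(voc_fcr(responses))  # 2 of 5 responses qualify: 0.4
```

Note that “Not sure” answers stay in the denominator here, which matches the formula above: only a confirmed “Yes” plus a single contact counts as resolved.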

Deflection vs resolution tracking

Track containment, but separate “didn’t reach an agent” from “issue resolved.”

Pair two numbers:

- Containment rate: % of interactions that end in self-service (no human)

- Self-service resolution rate (SSR): % of self-service interactions with no repeat contact within a defined window

SSR (practical):

- Pick a window (often 7–14 days, longer for slow-resolution intents like claims)

- SSR = 100% − (% of self-service interactions followed by a repeat contact for the same intent within the window)
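As a rough sketch of how the two numbers can be paired, the Python below computes both from a flat interaction log. The record layout (`customer`, `intent`, `at`, `human`) and the 7-day default window are assumptions for illustration. A "repeat" here means any later contact from the same customer for the same intent inside the window, in any channel.

```python
from datetime import datetime, timedelta


def containment_and_ssr(interactions, window_days=7):
    """Return (containment rate, self-service resolution rate) as fractions."""
    window = timedelta(days=window_days)
    self_service = [i for i in interactions if not i["human"]]
    if not interactions or not self_service:
        return None, None

    repeated = 0
    for s in self_service:
        # Did the same customer come back for the same intent within the window?
        came_back = any(
            o is not s
            and o["customer"] == s["customer"]
            and o["intent"] == s["intent"]
            and s["at"] < o["at"] <= s["at"] + window
            for o in interactions
        )
        if came_back:
            repeated += 1

    containment = len(self_service) / len(interactions)
    ssr = 1 - repeated / len(self_service)
    return containment, ssr


interactions = [
    {"customer": "c1", "intent": "billing", "at": datetime(2025, 1, 1), "human": False},
    {"customer": "c1", "intent": "billing", "at": datetime(2025, 1, 3), "human": True},
    {"customer": "c2", "intent": "password", "at": datetime(2025, 1, 2), "human": False},
    {"customer": "c3", "intent": "claims", "at": datetime(2025, 1, 2), "human": False},
    {"customer": "c3", "intent": "claims", "at": datetime(2025, 1, 20), "human": False},
]

containment, ssr = containment_and_ssr(interactions)
print(containment)  # 4 of 5 interactions ended in self-service: 0.8
print(ssr)          # 1 of 4 self-service interactions repeated: 0.75
```

In this sample, containment looks healthy at 80%, but one contained billing chat came back two days later through an agent, so SSR is lower than containment suggests.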

Repeat-contact analysis by intent

This is where FCR actually gets fixed. Break repeat contacts down by intent and channel so you can fix the root cause.

Start simple:

- Define “repeat contact” = same customer + same intent within X days

- Then calculate: Repeat rate by intent = (# customers who repeat for intent X) ÷ (total customers contacting for intent X)
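A minimal Python sketch of this calculation follows. The tuple layout `(customer_id, intent, timestamp)` and the 7-day value for X are assumptions. It treats a customer as a repeater when two consecutive contacts for the same intent fall within the window.

```python
from collections import defaultdict
from datetime import datetime, timedelta


def repeat_rate_by_intent(contacts, window_days=7):
    """contacts: iterable of (customer_id, intent, timestamp) tuples."""
    window = timedelta(days=window_days)

    # Group contact timestamps by (customer, intent).
    times = defaultdict(list)
    for customer, intent, at in contacts:
        times[(customer, intent)].append(at)

    customers = defaultdict(int)  # intent -> customers who contacted
    repeaters = defaultdict(int)  # intent -> customers who repeated
    for (customer, intent), stamps in times.items():
        stamps.sort()
        customers[intent] += 1
        # Repeat = two consecutive contacts no more than `window` apart.
        if any(b - a <= window for a, b in zip(stamps, stamps[1:])):
            repeaters[intent] += 1

    return {intent: repeaters[intent] / n for intent, n in customers.items()}


contacts = [
    ("c1", "billing", datetime(2025, 1, 1)),
    ("c1", "billing", datetime(2025, 1, 4)),   # repeat within 7 days
    ("c2", "billing", datetime(2025, 1, 2)),
    ("c3", "claims", datetime(2025, 1, 1)),
    ("c3", "claims", datetime(2025, 1, 25)),   # outside the window
]

print(repeat_rate_by_intent(contacts))  # {'billing': 0.5, 'claims': 0.0}
```

Adding the channel to the grouping key is a small change if you also want to see which channel the repeats land in.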

Intents per contact (IPC)

IPC measures how often customers bring more than one “job to be done” in a single interaction. FCR can look fine on paper because you “resolved the primary intent,” but the customer leaves with a second (unhandled) need and contacts you again.

How to calculate (lightweight):

- Tag interactions with the number of intents handled (1, 2, 3+)

- IPC = (total intents) ÷ (total interactions)

So if 100 interactions include 130 total intents, IPC = 1.3.
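The same arithmetic as a small Python sketch. The split of 75, 20, and 5 interactions is made up to reproduce the 130-intent example, and counting a "3+" tag as exactly 3 is a simplifying assumption that slightly understates IPC.

```python
def intents_per_contact(intent_counts):
    """intent_counts: one number per interaction (intents handled in it)."""
    if not intent_counts:
        return None
    return sum(intent_counts) / len(intent_counts)


def multi_intent_share(intent_counts):
    """Fraction of interactions that carried more than one intent."""
    if not intent_counts:
        return None
    return sum(1 for n in intent_counts if n > 1) / len(intent_counts)


# 100 interactions: 75 with one intent, 20 with two, 5 tagged "3+" (counted as 3)
counts = [1] * 75 + [2] * 20 + [3] * 5

print(sum(counts))                  # 130 total intents
print(intents_per_contact(counts))  # 1.3
print(multi_intent_share(counts))   # 0.25
```

The second number is often the more actionable one: it tells you how many interactions are at risk of leaving a second need unhandled.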

Quick fix: add a closure check like, “Before you go, did we cover everything you came in for today?” This catches the second intent while the customer is still there, which is exactly what FCR is supposed to do.

Context-aware escalation quality

Measure how often handoffs are seamless. If the customer repeats information or the agent starts cold, that’s friction that drags down true resolution.

Two ways to measure it:

- Handoff completeness rate: % of escalations where the human receives intent + summary + key fields (account, product, case history)

- Repeat-yourself rate (VOC add-on): add one optional question: “Did you have to repeat information when you were transferred?” (Yes/No)

Even lightweight sampling here is enough to show whether your “hybrid” journey is actually hybrid or just a restart.
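Both measures can be computed from a small sample. The Python sketch below assumes each escalation is a dict of the fields the human received and each survey answer is `True` (Yes), `False` (No), or `None` (skipped); the field names are hypothetical and should be mapped to whatever your handoff payload actually carries.

```python
# Hypothetical field names for the handoff payload.
REQUIRED_FIELDS = ("intent", "summary", "account", "product", "case_history")


def handoff_completeness_rate(escalations, required=REQUIRED_FIELDS):
    """Share of escalations where every required field arrived non-empty."""
    if not escalations:
        return None
    complete = sum(1 for e in escalations if all(e.get(f) for f in required))
    return complete / len(escalations)


def repeat_yourself_rate(answers):
    """Share of answered surveys where the customer had to repeat information."""
    answered = [a for a in answers if a is not None]  # skip unanswered surveys
    if not answered:
        return None
    return sum(answered) / len(answered)


full = {
    "intent": "claim_status",
    "summary": "Customer asked about a delayed claim payout.",
    "account": "A-1001",
    "product": "auto",
    "case_history": ["case-17"],
}
escalations = [
    full,
    full,
    full,
    {**full, "summary": ""},  # agent starts cold: no summary was passed
]

print(handoff_completeness_rate(escalations))                   # 3 of 4: 0.75
print(repeat_yourself_rate([True, False, None, False, False]))  # 1 of 4: 0.25
```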

System-reported vs Customer-validated FCR comparison

What “good hybrid for FCR” actually looks like

Once you measure FCR from the customer’s perspective, the design requirements get clearer. A common buyer’s mistake is framing this as automation vs agents. The better frame is resolution quality at scale.

McKinsey describes the contact center as being at a crossroads: AI changes the work, but doesn’t remove the need for humans. In many cases, the absolute number of interactions requiring human intervention can still grow as overall volume increases and complexity rises.

AI improves FCR when it does three things well: makes decisions safer, handoffs cleaner, and humans more effective on the cases that actually matter.

1. Real-time summarization and context retrieval (so the agent starts “warm”)

A big chunk of repeat contacts comes from missing or wrong context: the agent didn’t see the right policy constraint, the bot didn’t capture key details, or the case history was scattered across systems.

Example: Before a call, AI summarizes prior cases, recent transactions, and key variables (like policy type or risk factors) so the agent starts with full context. After the call, AI generates a clean disposition formatted for the CRM, so the next touch doesn’t restart from scratch.

2. Next Best Actions (so nobody “wings it” on high-stakes calls)

Most copilots are good at suggestions. FCR needs something stricter: guidance that keeps the interaction on a compliant, consistent resolution path.

Example: A customer disputes a credit search. AI summarizes the issue, then presents the best approach for each likely scenario, guiding the agent on what questions to ask and what step to take next.

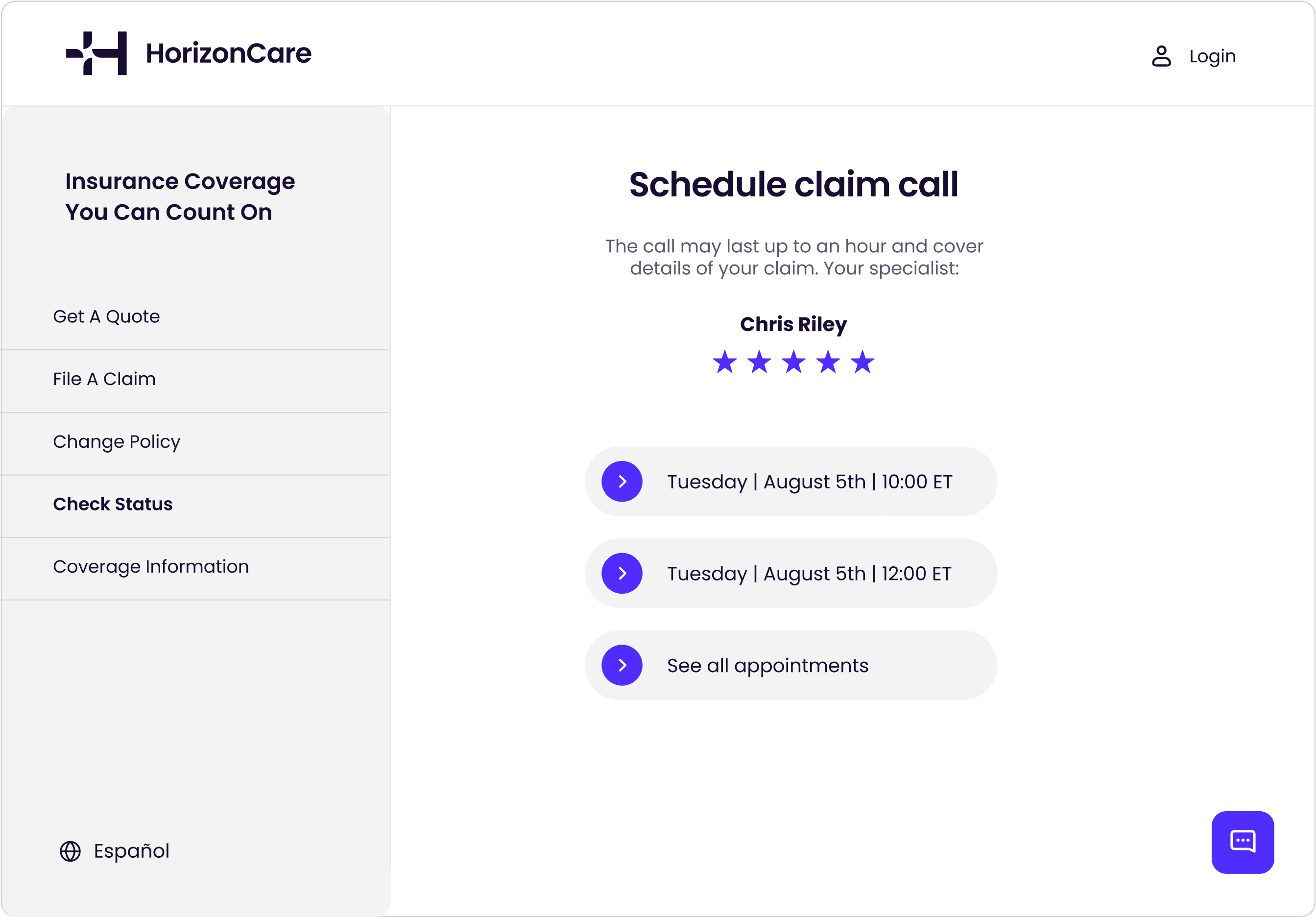

3. Qualified escalation that preserves trust

Automation breaks trust when it hangs on too long. For FCR, the best systems know when to answer, when to ask more questions, and when to escalate early with full context.

Example: A customer files an insurance claim. AI checks policy, billing, and fraud systems, detects a fraud signal, and auto-escalates to schedule a claim call with a specialist.

Transforming the workforce: from agents to advisors

As AI takes more Tier 1 work, the human queue changes. It gets smaller, but harder. That’s why your best lever isn’t more headcount. It’s better judgment per interaction.

McKinsey’s point lands here: as routine work shifts to AI and complexity limits pure efficiency gains, leaders have to realign talent toward higher-value work. In an FCR world, that “higher value” is closing messy issues in one touch.

Focus enablement on what directly moves FCR:

- Problem-solving + systems thinking

- Exception handling (when the “normal flow” doesn’t apply)

- Empathy + de-escalation

- Domain expertise tied to repeat-contact drivers

And make AI useful inside the workflow:

- Context-aware next-best actions

- Suggested phrasing for tense moments (without forcing scripts)

- Instant access to customer, policy, and product context

- Summaries and clean notes that reduce after-call gaps (which often become tomorrow’s repeat contact)

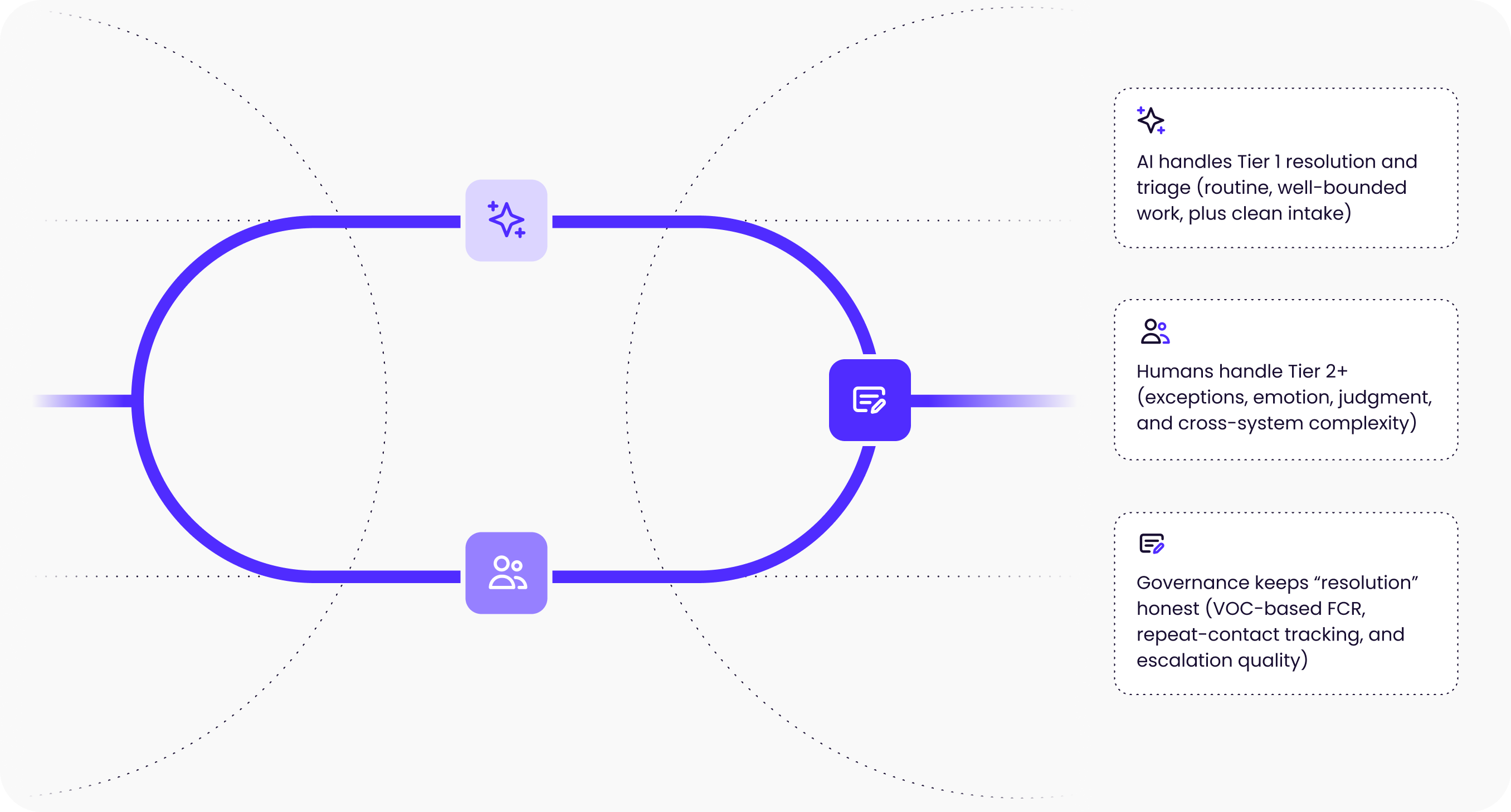

The future of contact centers: hybrid human-AI models for optimal FCR

McKinsey reinforces that the real challenge is finding the right mix of humans and AI, not racing to full automation. For FCR, that “mix” is the difference between customers getting closure and customers getting bounced.

In practice, that looks like:

- AI handles Tier 1 resolution and triage (routine, well-bounded work, plus clean intake)

- Humans handle Tier 2+ (exceptions, emotion, judgment, and cross-system complexity)

- Governance keeps “resolution” honest (VOC-based FCR, repeat-contact tracking, and escalation quality)

This matters for FCR because once AI takes the simple work, the contacts that still reach a human are disproportionately the ones most likely to fail the first time, unless the agent is well supported.

Frequently asked questions

What is First Contact Resolution (FCR)?

FCR means a customer’s issue is fully solved in the first interaction without transfers, callbacks, or follow-ups. The key is “stayed solved,” not “interaction ended.”

What AI use cases most improve FCR (not just containment)?

The highest-impact patterns are:

- Real-time context retrieval + summarization (warm starts)

- Context-aware next-best actions (consistent resolution paths)

- Qualified escalation with full context (no bot loops, no resets)

How does Zingtree help improve FCR?

Zingtree helps teams increase FCR by standardizing and governing complex resolution workflows, supporting AI-assisted guidance and context-rich handoffs so customers don’t get bounced or forced to repeat themselves.

What types of teams typically use Zingtree for FCR?

Teams with complex, high-stakes support workflows, especially where policies, compliance, and multiple systems make “right-first-time” resolution difficult (common in healthcare, insurance, and financial services).

What’s a realistic first step to improve FCR this quarter?

Start by measuring VOC-based FCR, then pick one high-repeat intent and fix it end-to-end: tighten intake, improve context access, add next-best-action guidance, and audit escalation quality.
